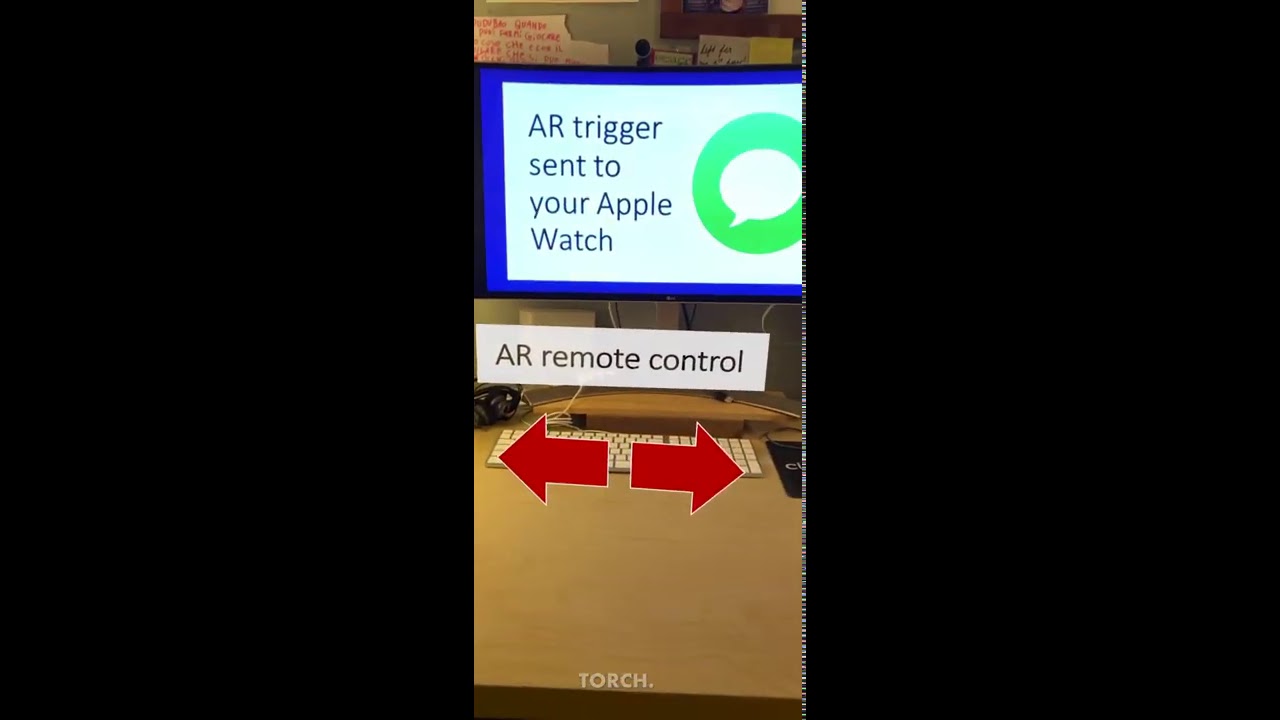

I’d like to share my latest tech mash-up, which is a combination of Apple Watch, Augmented Reality and Intuiface. Here is how it works: you receive a text message with an image tracker. Using a special AR app running on your phone, you ‘scan’ the image on your watch, which generates a control panel floating in thin air. This AR panel has virtual buttons; when you engage with them, you are able to change content on a remote Intuiface XP.

Here are the steps I followed:

- First, I created the Intuiface assets. In my case, I used PowerPoint to draft a few slides, exported them as PNG and imported them into an asset flow

- Then I added the Local Network Triggers IA and the respective actions that will interpret the network messages from the AR app. In my case, I only wanted to go back and forth with the carousel (download my XP here) but you could play videos, change images and more

- I installed the Torch AR app on my iPhone, which is a drag & drop authoring environment for AR experiences. No coding required, although there is a learning curve

- I imported the image assets that compose my AR control panel (a text header and two arrows). Torch is sort of like Intuiface: you have triggers and actions. I assigned a remote command to each arrow using Intuiface's API call. Here is what the right arrow linked to:

`http://<IP address of the PC running Intuiface>:8000/intuiface/sendMessage?message=AR&parameter1=Next`

- In Torch I also created an image tracker interaction: when the phone camera detected the image, it’d spawn the AR control panel. I could have printed the tracker image, but using the Apple Watch was an irresistible scenario

- My iPhone and PC had to be on the same network. I had to change my network profile settings to Private and disable the firewall so the network message could reach Intuiface
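
If you want to check the Intuiface side before building anything in Torch, a small script can send the same kind of request from another machine on the network. The following is a minimal Python sketch, not something taken from my XP: it assumes the endpoint accepts a plain HTTP GET in the same shape as the right-arrow URL above, and the function names and the `192.168.1.50` address are placeholders made up for illustration.

```python
from urllib.parse import urlencode
from urllib.request import urlopen


def build_trigger_url(host: str, message: str, parameter1: str, port: int = 8000) -> str:
    """Build a sendMessage URL in the same shape as the right-arrow link."""
    # urlencode escapes the values and joins them with "&"
    query = urlencode({"message": message, "parameter1": parameter1})
    return f"http://{host}:{port}/intuiface/sendMessage?{query}"


def send_trigger(host: str, message: str, parameter1: str, timeout: float = 3.0) -> int:
    """Send the request (assumed to be a GET) and return the HTTP status code."""
    url = build_trigger_url(host, message, parameter1)
    with urlopen(url, timeout=timeout) as response:
        return response.status


if __name__ == "__main__":
    # Placeholder address: replace with the IP of the PC running Intuiface
    print(build_trigger_url("192.168.1.50", "AR", "Next"))
```

Calling `send_trigger("192.168.1.50", "AR", "Next")` from a machine on the same network should have the same effect as tapping the right arrow. If it times out, the firewall or network profile settings mentioned above are the first things to check.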

And that’s it. Admittedly, it took me several hours to patch all the pieces together, with the AR and network parts consuming most of that time.

What use cases do you see for AR and Intuiface?