This is a follow-up to my Deloitte AI Gallery Interactive Walkthrough post. With the help of our VR designer Michael Gelon, I’d like to explain how we were able to include the concept of a plexus in Intuiface. For context, here is the screencast of the specific experience (skip to 1:01):

We were asked to display three categories with five sub-points each as an evolving, 3D plexus (a graphic of nodes connected by lines). In the interest of time and resources, we opted for a pre-rendered offline graphic rather than a programmatic solution.

The idea was to use looping and transition videos, triggered by invisible buttons in Intuiface. After Effects was the right tool for these motion graphics, and Rowbyte’s Plexus 3 plugin sped up the process.

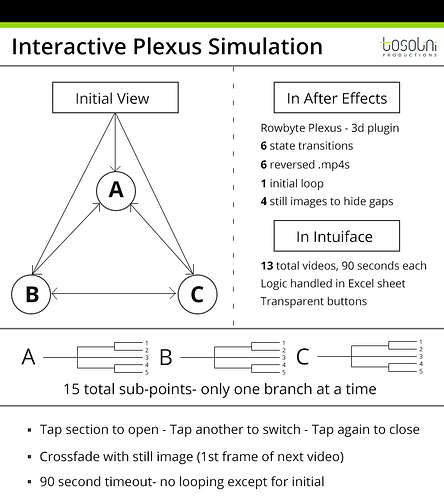

Logic

In the initial loop state, all choices are “closed.” Each choice has an open state, which animates out its five data points. Opening a second state closes the previous one.
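Together with the rule that tapping an open category again returns to the intro loop (described later in this section), this open/close behavior amounts to a small state machine. The sketch below is a hypothetical Python model for illustration only; in the actual project the logic lived in Intuiface triggers and an Excel sheet. The category labels `A`, `B`, `C` are placeholders, and `None` stands for the intro loop.

```python
from typing import Optional

# Placeholder labels for the three categories (hypothetical names).
CATEGORIES = ("A", "B", "C")


def next_state(current: Optional[str], tapped: str) -> Optional[str]:
    """Return the category that is open after a tap.

    `current` is the open category, or None while the intro loop plays.
    The return value uses the same convention.
    """
    if tapped not in CATEGORIES:
        raise ValueError(f"unknown category: {tapped!r}")
    if current == tapped:
        # Tapping the open category closes it: back to the intro loop.
        return None
    # Any other tap opens the tapped category, which implicitly
    # closes whichever category was open before.
    return tapped
```

Starting from `None`, the taps `A`, `B`, `B` move the state to `"A"`, then `"B"`, then back to `None`.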

The user scrolls down the screen and arrives at the plexus section. An Intuiface background video starts, looping the initial choice triangle with motion prompts and subtle node movement. In the graphic, the labeled nodes are locked in place while the background nodes morph around them. Because we cannot predict at what point in the animation the user will switch sections, this reduces obvious frame jumping when the video swaps.

The user taps a choice. This starts a logic chain documented in an Excel spreadsheet.

The chain places a still of the next video’s first frame behind the current video, fades out the current video, fades in the next one, and moves the button set to its updated positions. The background still masks the flash that can occur when videos swap, since Intuiface needs 0.3 seconds to filter the results of a new Excel query.
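The order of these actions is what prevents the flash: the still goes behind the current video before anything fades, so there is never a frame with nothing on screen while the next video loads. Here is a hypothetical sketch of the chain as an ordered list of actions; the action names and asset names are illustrative placeholders, not Intuiface actions.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Step:
    action: str  # hypothetical action name
    target: str  # asset the action applies to


def swap_steps(current_video: str, next_video: str, next_still: str) -> List[Step]:
    """Ordered actions for one video swap (illustrative model only)."""
    return [
        # 1. Cover the gap: a still of the next video's first frame
        #    sits behind the video that is currently playing.
        Step("show_still_behind", next_still),
        # 2. Fade out the current video, revealing the still.
        Step("fade_out", current_video),
        # 3. Fade in the next video on top of the matching still.
        Step("fade_in", next_video),
        # 4. Move the invisible buttons to the next state's node positions.
        Step("move_buttons", next_video),
    ]
```

Keeping the still in place until the next video has faded in means the 0.3-second query delay is hidden behind an image that already matches the incoming first frame.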

We used dynamic camera movements in After Effects to emphasize the “3D-ness” of the plexus, so the buttons are in slightly different positions for each state. The transparent buttons move accordingly. This first swap is “Intro > Category”.

If the user taps the same category again, a separate, reversed export of the same video animates back into the “intro” loop. The end point of each video is designed to lead into the start of the next. If the user taps another category, the swap is “Category > Category”. We achieved this by chaining transition videos in After Effects. The sequence for this type of swap is “Reverse to default > Forward to new > New looping.” Each video lasts 90 seconds, the time-out length for the screen. Baking the loops into the transitions let us avoid dealing with more seams when switching videos.
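The three kinds of swap can be captured as a lookup from (current state, next state) to the clip that plays. This is a hypothetical sketch: the file-naming scheme is invented for illustration, and `None` again stands for the intro loop. Each Category > Category clip is assumed to already contain the whole “Reverse to default > Forward to new > New looping” chain, so a swap never needs more than one clip.

```python
from typing import Optional

CATEGORIES = ("A", "B", "C")  # placeholder category labels


def clip_for(current: Optional[str], target: Optional[str]) -> str:
    """Pick the pre-rendered clip for a swap; None means the intro loop.

    File names are hypothetical. Every clip is assumed to run 90 seconds
    (the screen's time-out) with its destination loop baked in.
    """
    for state in (current, target):
        if state is not None and state not in CATEGORIES:
            raise ValueError(f"unknown state: {state!r}")
    if current == target:
        raise ValueError("no swap needed")
    if current is None:
        # Intro > Category: forward export.
        return f"intro_to_{target}.mp4"
    if target is None:
        # Category > Intro: a separate, reversed export of the forward clip.
        return f"{current}_to_intro_reversed.mp4"
    # Category > Category: reverse to default, forward to new, new looping,
    # all chained into one clip in After Effects.
    return f"{current}_to_{target}.mp4"
```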

Challenges

- File size: We used thirteen 90-second videos for this section, totaling 1.3 GB of data. If Intuiface supported reverse video playback, this could be cut in half.
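One plausible breakdown of the thirteen clips, assuming one intro loop plus one clip per possible swap, is counted below. This inventory is an assumption rather than something taken from the project files, but it does add up to 13 and implies an average of about 100 MB per 90-second clip (taking 1.3 GB as 1,300 MB).

```python
from itertools import permutations

CATEGORIES = ("A", "B", "C")  # placeholder labels for the three categories

# Assumed inventory: one intro loop, plus one clip per possible swap.
intro_loop = 1
intro_to_category = len(CATEGORIES)  # Intro > A, B, C
category_to_intro = len(CATEGORIES)  # reversed exports back to Intro
# Ordered pairs such as A > B and B > A are separate clips.
category_to_category = len(list(permutations(CATEGORIES, 2)))

total_clips = intro_loop + intro_to_category + category_to_intro + category_to_category

TOTAL_MB = 1300  # 1.3 GB, counted in decimal megabytes
average_mb = TOTAL_MB / total_clips

print(f"{total_clips} clips, about {average_mb:.0f} MB each")
```

Under this assumption, reverse playback would at least let the three Category > Intro clips reuse their Intro > Category exports instead of shipping separate reversed files.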

- Seams: The big challenge with the “fake” plexus was keeping it in motion while still letting users switch states at any point. The main elements hold a steady position, and the videos fade into and out of a background image that covers the blank frame (the “flashing”) while a video loads. The fade also softens any jarring position changes in the background nodes and lines.

- After Effects project: It took a few tries to organize the elements cleanly in the After Effects project, since linking up the different animations required a clear project structure.

Overall, this approach creates the illusion of a computational, 3D plexus that responds to touch. A programmatic approach would be more seamless and interactive, but would miss out on some of the more dynamic motion graphic elements. The videos were quicker to produce than a custom system would have been to build, but they are slower to edit than a finished programmatic system would be.

Have you tried to include a plexus in your Intuiface projects? If so, I’d love to hear whether you found alternative solutions.