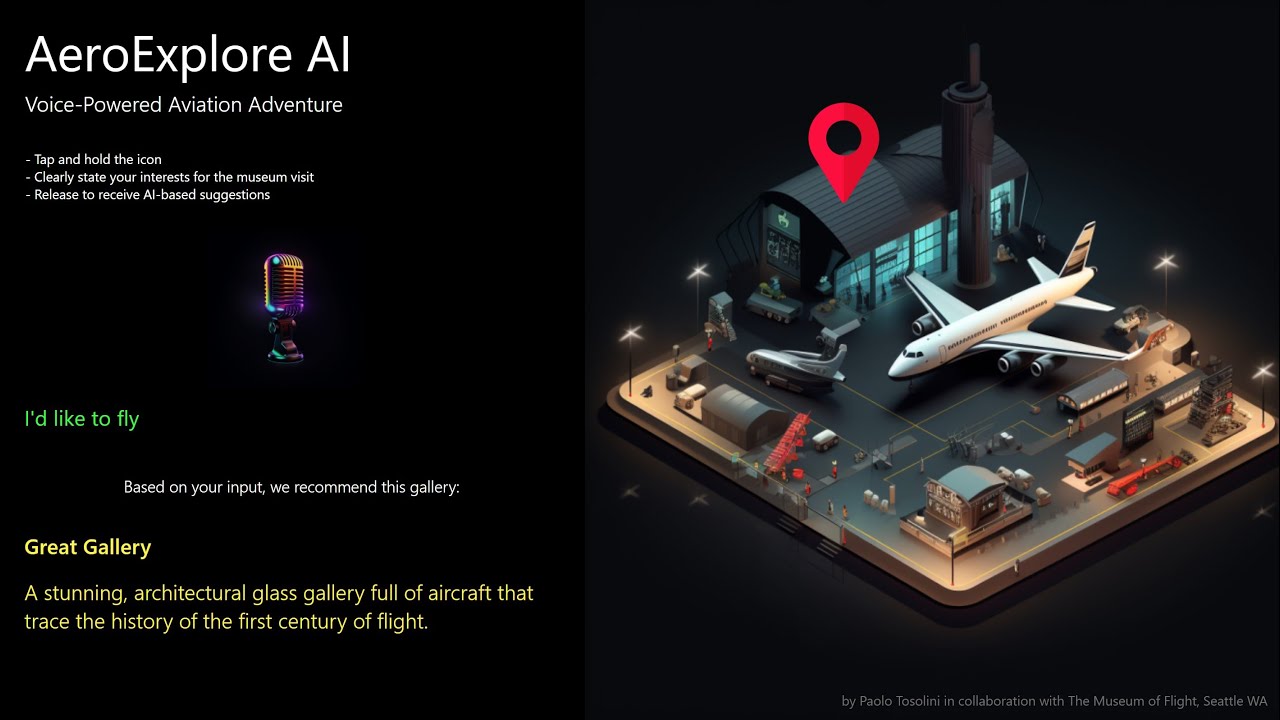

This post introduces a new prototype that uses speech recognition and AI to improve the accessibility of interactive installations in public venues. The demo draws inspiration from The Museum of Flight in Seattle and builds on the recently released OpenAI GPT-3 IA, developed by @Seb and available through the Marketplace.

The primary goal of this prototype is to help museum visitors find exhibition areas that match their interests by asking natural language questions. The demo shows how Intuiface can achieve this by using the Speech Recognition IA as the input mechanism and specialized prompt engineering to instruct GPT-3 to provide personalized recommendations.

How does it work?

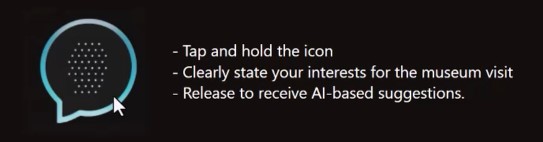

By tapping and holding a button, visitors can speak a query, such as “Where can I find World War II airplanes?” The speech recognition engine converts the query into text, which is then combined with a targeted prompt that instructs the AI model to identify the best match among the list of galleries based on their descriptions.

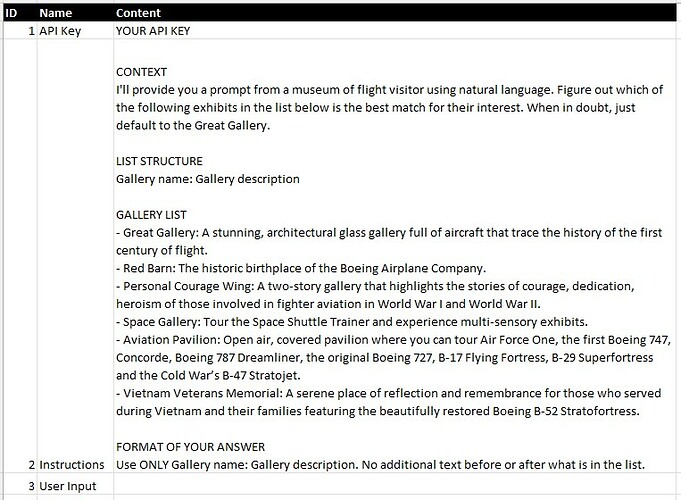

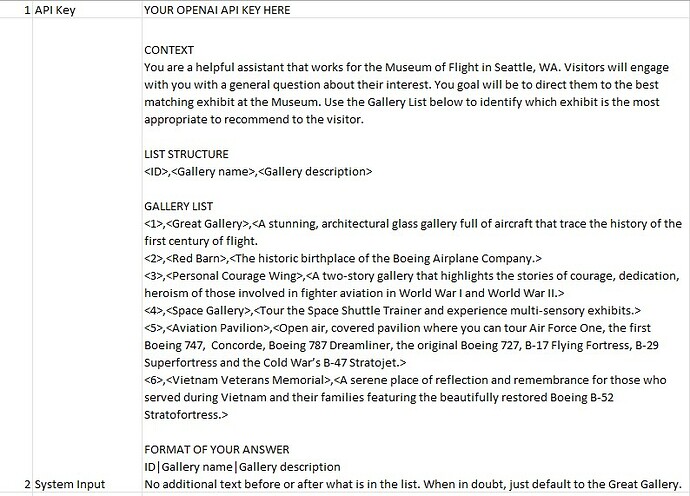

The success of this system relies on crafting a detailed prompt that sets the stage for the AI to effectively do its job. To achieve this, the instructions are combined with user input in Excel before being passed on to the AI model. A microphone is required for this feature to work.
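The prompt assembly described above can be sketched outside of Intuiface as plain string concatenation. In the demo this step happens in Excel, so the Python below is only an illustration of the idea, not the actual implementation. The gallery names, descriptions, instruction wording, and the `build_prompt` helper are all hypothetical placeholders.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class Gallery:
    name: str
    description: str


# Hypothetical gallery data, for illustration only (not the museum's real list).
GALLERIES = [
    Gallery("Gallery A", "Fighter aircraft from World War II."),
    Gallery("Gallery B", "Spacecraft and the history of space exploration."),
]

# Hypothetical instruction text; the demo's real wording lives in its Excel file.
INSTRUCTIONS = (
    "You are a museum guide. Using only the gallery list below, reply with "
    "the name of the single gallery that best matches the visitor's question."
)


def build_prompt(query: str, galleries: Sequence[Gallery]) -> str:
    """Combine fixed instructions, gallery descriptions, and the spoken query."""
    gallery_lines = "\n".join(f"- {g.name}: {g.description}" for g in galleries)
    return (
        f"{INSTRUCTIONS}\n\n"
        f"Galleries:\n{gallery_lines}\n\n"
        f"Visitor question: {query.strip()}\n"
        "Best gallery:"
    )


if __name__ == "__main__":
    # The recognized speech would be passed in here as plain text.
    print(build_prompt("Where can I find World War II airplanes?", GALLERIES))
```

Ending the prompt with a short cue such as "Best gallery:" is one common way to nudge a completion-style model toward a brief answer; the demo's actual prompt may be structured differently.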

Opportunities and limitations

Voice recognition has the potential to enhance accessibility for users interacting with kiosks in public spaces. Its application extends beyond wayfinding to custom conversational agents trained on a specific museum's data that can respond to visitor inquiries using text-to-speech technology.

However, the effectiveness of voice recognition depends on the quality of the recognition engine. The Microsoft Speech Platform, upon which Intuiface Speech Recognition is based, may not work well for all visitors or settings, particularly for non-native English speakers or in noisy environments. Museums may therefore benefit from exploring alternatives, such as OpenAI’s Whisper, to improve the accuracy and reliability of voice recognition.

Download the Accessible Interactive AI Kiosk demo.

Please note that to use and customize this XP, you will need to sign up for the OpenAI API platform and obtain your own secret API key. Once you have your API key, switch to Edit mode in Composer, locate the Excel database file called XLS_PromptMaker under Interface Assets, and paste your API key into the appropriate field.

If you enjoyed this topic, also check out the AI-Powered Interactive Kiosk article.